Category: 360 videos

-

Apple Pure Vision and the Immersive Experience Opportunity

Reading Time: 4 minutes

Memorable VR experiences

AR/VR and XR have been around for years, if not decades. The most unique VR experience I was involved with had people wearing an immersive headset whilst snorkelling in a pool, to experience being “weightless” whilst watching an immersive video. The second most interesting 360 video experience was a…

-

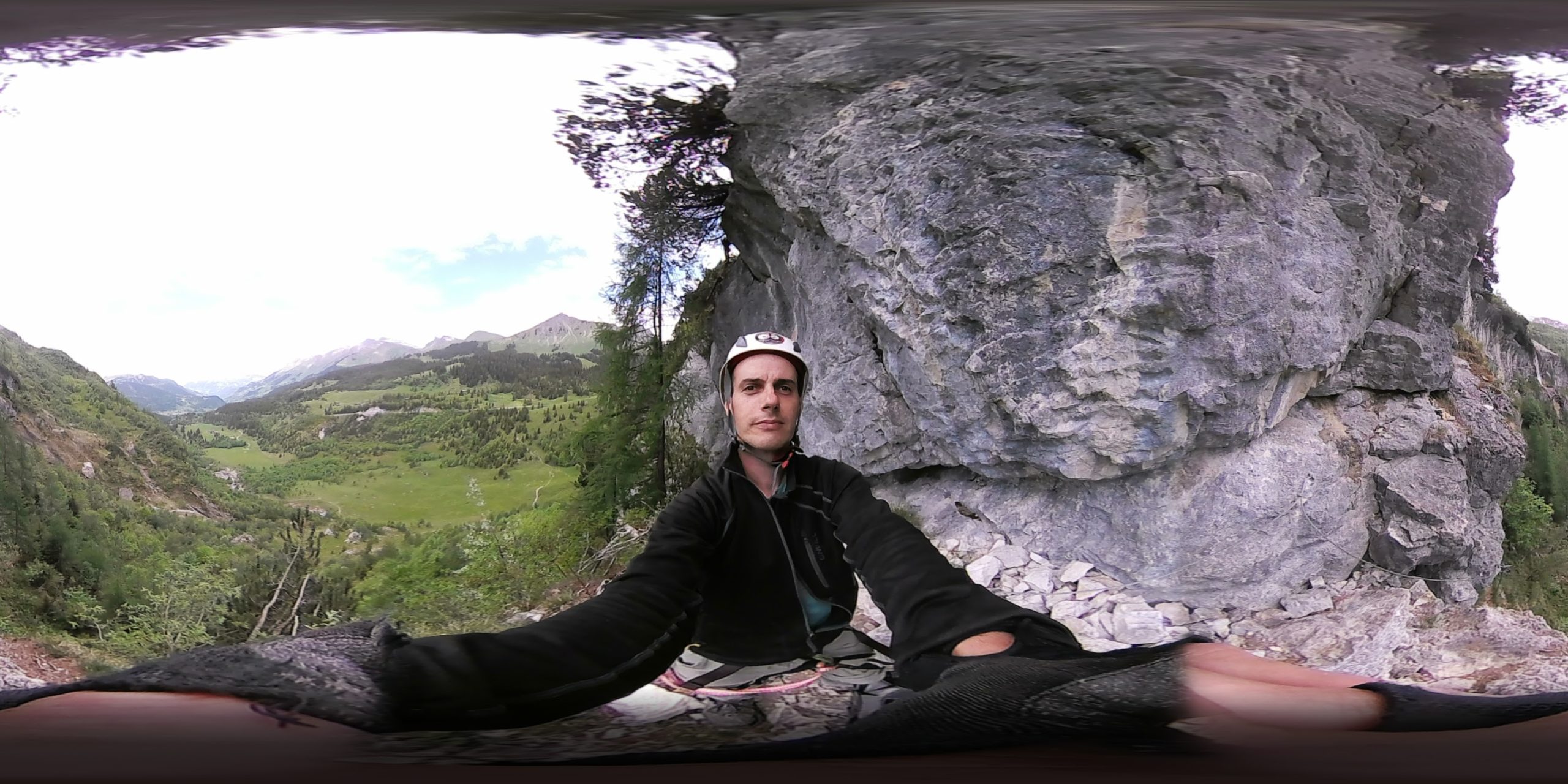

Filming events in 360

Reading Time: 2 minutes

We have all seen events covered by photographers and camera operators, but how many events have we seen covered with 360-degree videos? A few weeks ago I filmed the Escalade, wrestling and other events with 360 cameras and it was fun. In some cases it was the opportunity to play with…

-

A continued Interest in 360 Videos

Reading Time: < 1 minute

A few weeks ago I was at the Geneva International Film Festival as a volunteer, so I got to play with and experience new 360 experiences. Some of these were interesting because we could move around in space, whilst others were interesting because of their length. A few years…

-

Sea of Tranquility – Snorkeling VR by Pierre Friquet

Reading Time: 3 minutes

During the World XR Forum this year in Crans-Montana I helped Pierre Friquet with his Sea of Tranquility VR Experience. This VR experience was unique in that it required you to be in your swimming clothes, your underwear or other. This was a VR experience where you went from being…

-

A 360° cooking Show would be interesting to watch.

Reading Time: 3 minutes

For a few weeks now I have been thinking about how you could make a 360° cooking show. For this video I would like to be able to see the process from an angle where I see the person cooking. I would also like to see all of the ingredients and the…

-

A 360 Video of planes landing – An experiment

Reading Time: 2 minutes

I prepared a 360 video of planes landing at Geneva International Airport yesterday afternoon. Watching planes land, especially when you’re right underneath them just as they’re about to touch down, is a lot of fun. You see the lights in the distance and slowly those lights approach. There is a point after which…

-

The Theta+ Video app is available

Reading Time: 2 minutes

Yesterday the Theta+ Video app came out for Android. The Theta+ Video app allows you to trim video clips and then share them to social networks. This means that you no longer need to wait until you get home to prepare content for sharing. You can do it while you sit and have…

-

360 timelapse videos

Reading Time: 3 minutes

360 timelapse videos provide us with interesting new opportunities. Imagine, for example, placing the camera out to sea near Weymouth beach and watching as the tide comes towards the camera and then beyond it towards the city. Imagine watching as the sun rises on one side of the Leukerbad Valley and sets…